What changes when AI stops answering questions and starts acting inside your email, files, browser, and apps?

Table of Contents

Introduction to Agentic AI

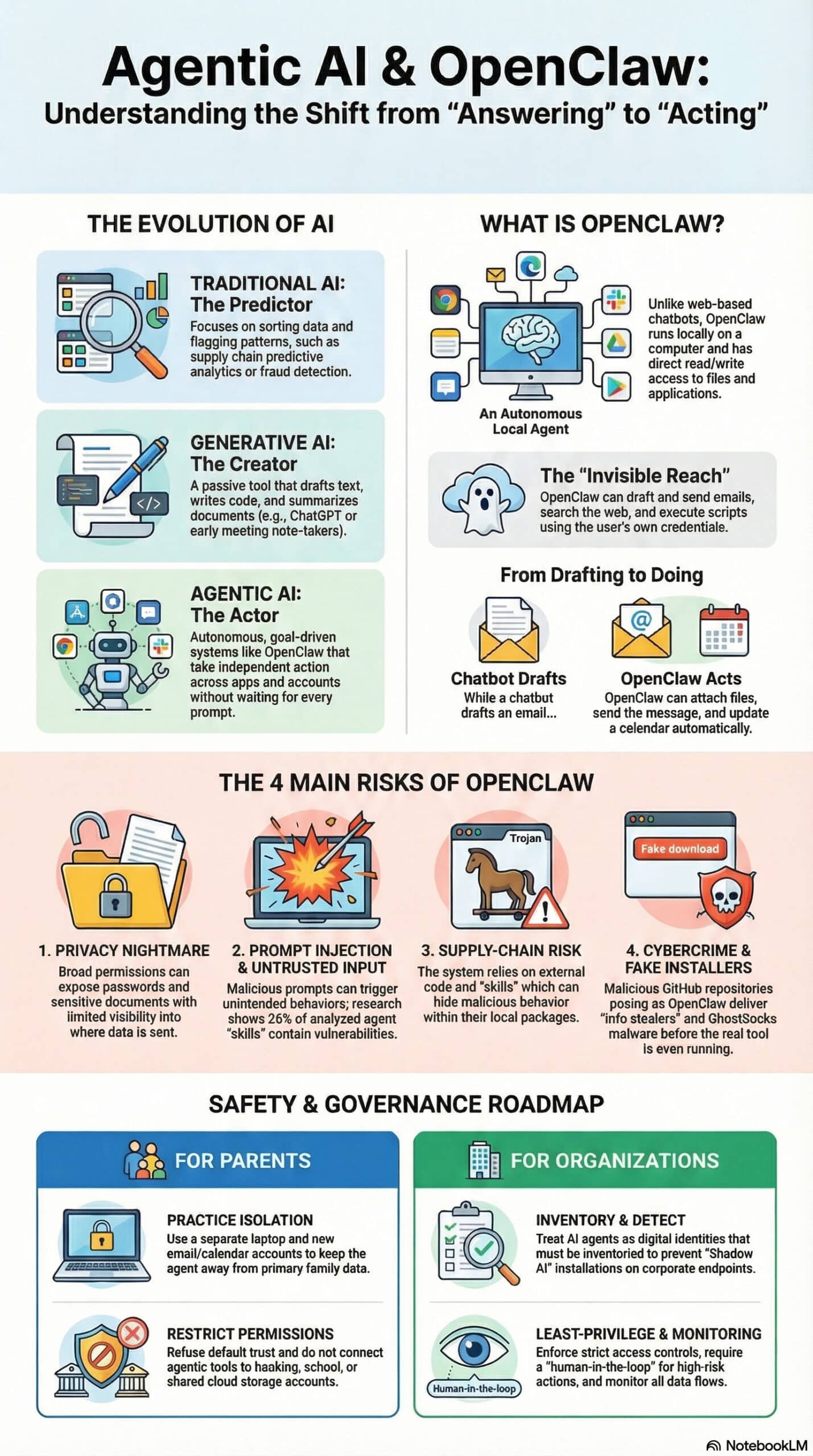

A friend recently asked me about OpenClaw after reading that it could interact with email, files, and applications rather than simply respond like ChatGPT. While he was concerned more for his family, the questions and discussion is much broader. That question points to a much larger shift already underway. We are no longer dealing with one form of AI. We are operating across three levels. Traditional AI predicts and sorts. Generative AI creates content. Agentic AI acts, executing tasks across systems with limited supervision, raising new challenges for governance.

OpenClaw sits in that third category, and it changes the risk equation. Reports describe OpenClaw as an open-source AI agent launched in late 2025 that runs locally and can manage tasks, interact with applications, and read and write files directly. The issue is no longer whether AI produces a bad answer. The issue is whether it takes a bad action with access to your data, accounts, and systems, which necessitates strong governance. That risk is showing up inside organizations through Shadow AI, defined by IBM as the unauthorized use of AI tools without oversight.

This is where leadership is behind. Agentic AI is spreading faster than governance. Many deployments occur without visibility, control, or accountability, exposing organizations to security, compliance, and operational risk.

The infographic puts it succinctly:

OpenClaw is not the problem. It is the signal. AI has moved from assistance to execution, and governance, policy, and responsible use guidelines must catch up now. Families are catching on too. Are you?

Three Practical Types of AI

Most people lump all AI into one bucket. That muddies the discussion. In day-to-day use, it helps to separate AI into three practical types. Traditional AI predicts, sorts, and flags patterns. Think of supply chain management as using criteria, thresholds, and predictive analytics to determine economic ordering quantities (EOQ). This type of AI could also include fraud and anomaly detection, as well as routing tasks.

Generative AI, by nature passive, creates content. It drafts text, writes code, summarizes documents, and creates images. Think development of a 70% solution for event planning and onboarding new employees, standard operating procedures, or analyzing requests for proposals (RFP) submissions to produce competitive bids. PS: I’ve done all of these! AI meeting note-takers like Otter.ai (OtterPilot) and Fireflies.ai are transitioning from passive transcription tools to agentic AI by adopting autonomous, goal-driven capabilities.

Agentic AI represents a significant shift from conversational passive generative AI to autonomous, goal-driven systems that act on behalf of the user. Unlike traditional or generative AI, which waits for prompts, agentic AI can take independent action across systems, tools, and accounts to achieve a specified outcome, such as completing a multi-step workflow. It does not stop producing output unless you manage, modify, or control it. Scary.

Consider the new “corporate solution” craze: Call Centers and Chatbots, such as Content Guru, manage complex tasks and workflows. Call center AI has moved through clear stages, and each type plays a distinct role. Conversational AI handles dialogue through chatbots and voice systems, using natural language processing (NLP) and machine learning to understand and respond to users in real time. Generative AI builds on that by creating content such as summaries, responses, and recommendations using large language models.

Agentic AI goes further. It does not just respond or create. It takes action across systems, completing tasks independently and coordinating workflows. Supporting all of this are core technologies like NLP for language understanding and sentiment analysis to detect tone and trigger escalation.

The shift is clear. AI is moving from scripted interaction to autonomous execution, increasing capability but demanding tighter control. Content Guru, for example, provides cloud-based Customer Experience (CX) and Contact Center as a Service (CCaaS) solutions. Their flagship platform, storm®, enables organizations to manage communications across multiple channels—such as voice, email, social media, and AI chatbots—in a single, secure environment. Maybe

What OpenClaw Is, Why It Is Different

Consider the emerging craze: A multitude of Agentic AI tools, including OpenClaw, are helping employees and managers be more efficient and effective. OpenClaw falls into the third group. Sources consistently describe it as an autonomous or agentic AI system, not a standard chat model. Scary. Shadow AI or the use of unauthorized, unvetted artificial intelligence tools, chatbots, or software by employees or teams without is an issue for organizations, especially those without a governance oversight function or responsible use of AI guidelines.

What’s scary about OpenClaw is the appeal to children. That is why the current conversation around AI feels different. We are no longer talking only about machine assistance. We are talking about machine execution. OpenClaw is different because it has intrusive or invisible reach. Malwarebytes describes it as an autonomous AI agent that runs locally on a computer, manages tasks, interacts with applications, and reads and writes files directly.

Northeastern reports that its distinguishing feature is direct access to a user’s apps and files, which gives it greater access and control than a standard large language model. Microsoft adds that self-hosted runtimes like OpenClaw can ingest untrusted text, download and execute skills from external sources, and perform actions using assigned credentials.

That is the line people need to understand. ChatGPT-style tools mainly respond when asked. OpenClaw can often do so without permission. A chatbot may draft an email. OpenClaw may draft it, send it, attach files, search the web, update a calendar, and execute follow-up tasks. That added convenience is what makes agentic AI attractive. It is also what makes it dangerous. The more autonomy you grant, the more guardrails you need. When a tool can browse, execute scripts, and work with credentials, a bad answer is no longer just misinformation. It can become a live security problem.

The Main Issues With OpenClaw

The first issue is privacy. Northeastern quoted a cybersecurity professor calling OpenClaw a “privacy nightmare” because it may expose passwords, documents, and personal information while giving users limited insight into how the agent processes that information or where it sends it. That should get every parent’s attention (and employer’s, for that matter). An always-connected personal agent with broad permissions is not a harmless toy.

The second issue is prompt injection and untrusted input. Microsoft warns that OpenClaw can ingest untrusted text and perform actions using assigned credentials. Cisco reports that OpenClaw’s integration with messaging applications widens the attack surface, as malicious prompts can trigger unintended behavior. Cisco also found that agent “skills” can include embedded threats, and its research cited broader evidence that 26% of 31,000 analyzed agent skills contained at least one vulnerability. In one skill tested against OpenClaw, Cisco found 9 security findings, including 2 critical and 5 high-severity issues, including silent data exfiltration and direct prompt injection.

The third issue is supply-chain risk. OpenClaw relies on outside code, skills, and extensions. Microsoft notes that the runtime can download and execute skills from external sources. Cisco warns that local skill packages remain untrusted inputs and can hide malicious behavior within the files themselves. Sophos frames OpenClaw as a warning shot for enterprise AI security because agentic systems expand the attack surface and introduce new control failures.

The fourth issue is plain old cybercrime. Huntress documented malicious GitHub repositories posing as OpenClaw installers that distributed information stealers and GhostSocks malware, and it reported that Bing AI search results elevated one of those fake installers. Malwarebytes also warned about fake OpenClaw installers on GitHub being used to deliver infostealers and proxy malware. That means the danger begins before someone even gets the legitimate product running.

What Parents Should Do Right Now

Parents do not need a computer science degree to respond wisely. They need discipline. The safest move is not to install experimental agentic AI tools on shared family devices. Northeastern’s expert recommendation was blunt. Use a separate laptop, a new email account, and new calendars without giving the agent any real access. That advice is worth repeating because it cuts through the hype. Isolation matters.

Parents should also separate accounts, restrict permissions, and refuse default trust. Do not connect tools like OpenClaw to primary family email, banking, school accounts, or shared cloud storage. Do not let children experiment with autonomous agents that can browse, send messages, or execute commands without strong oversight. If a technically skilled adult insists on testing one, do it in a disposable environment with limited accounts and no sensitive data. Microsoft’s guidance around identity, isolation, and runtime risk points in the same direction.

There is also a mentoring moment here. Children and teens need to learn one simple distinction. Some AI talks. Some AI acts. That difference should shape how much trust, freedom, and access they give any system. A chatbot that writes a bad paragraph is annoying. An AI agent that moves files, sends messages, or exposes credentials is a very different problem.

Why This Matters Beyond OpenClaw

OpenClaw is not just one tool with rough edges. It is a signal. It shows where AI is headed. The market is moving from tools that assist to tools that execute. That can bring major gains in productivity. It also brings a larger blast radius when security is weak, permissions are sloppy, or users do not understand what they have installed. That is why I would not frame this as “AI taking over.” I would frame it as the old story of technology and access. The more power you give a system, the more damage it can do when it fails, gets tricked, or gets compromised. OpenClaw exposes three enterprise failures:

- Visibility: Security teams cannot see what agents access or move. That creates blind spots. Modern AI agents operate through APIs and credentials that often bypass standard monitoring.

- Control. OpenClaw expands the attack surface through integrations, skills, and external code. That introduces prompt injection, data exfiltration, and supply chain risk at scale.

- Accountability. No clear owner. No audit trail. No policy enforcement. That is the definition of Shadow AI.

OpenClaw is not the problem. It reveals the problem. OpenClaw is not just a risky tool. It is a case study in governance failure. Multiple reports show employees are already installing tools like OpenClaw on corporate endpoints without approval. That is Shadow AI in action. Once deployed, these agents can access files, credentials, APIs, and internal systems. That breaks traditional security boundaries fast.

Summary and Call to Action

OpenClaw belongs to the agentic category of AI. Traditional AI predicts. Generative AI creates. Agentic AI acts. That is the big distinction. OpenClaw runs locally, interacts with files and apps, and can execute tasks on a user’s behalf. That makes it more powerful than a chatbot, and more dangerous if misused. The main issues are privacy exposure, prompt injection, malicious or vulnerable skills, and fake installers carrying malware. Security researchers and academic experts are not treating these as minor bugs. They are treating them as serious warnings.

Organizations should consider the following enterprise governance actions:

- Inventory and detect all AI agents. Treat them like identities, not tools

- Enforce least-privilege access. No default system-wide permissions

- Require human-in-the-loop for high-risk actions

- Block unsanctioned installs on endpoints

- Monitor data flows. Know what AI can read, write, and send

- Establish clear acceptable use policies and training

My Recommendations

My recommendation is simple. Do not let curiosity outrun judgment. Keep OpenClaw and similar experimental agentic tools off shared home devices. Use mainstream AI products with tighter controls for children, families, and your organization.

Teach the difference between AI that answers and AI that acts. If you choose to experiment, isolate it, restrict it, and trust it like you would trust an unsupervised intern with your passwords. That means not at all.

Learn more at my Strategic Health Leadership website: www.SHELDR.com and articles such as: Patient Safety: 3 Paths to Enhance Near-Miss Data With AI