
Oh My, Patient Safety, Leadership, and AI!

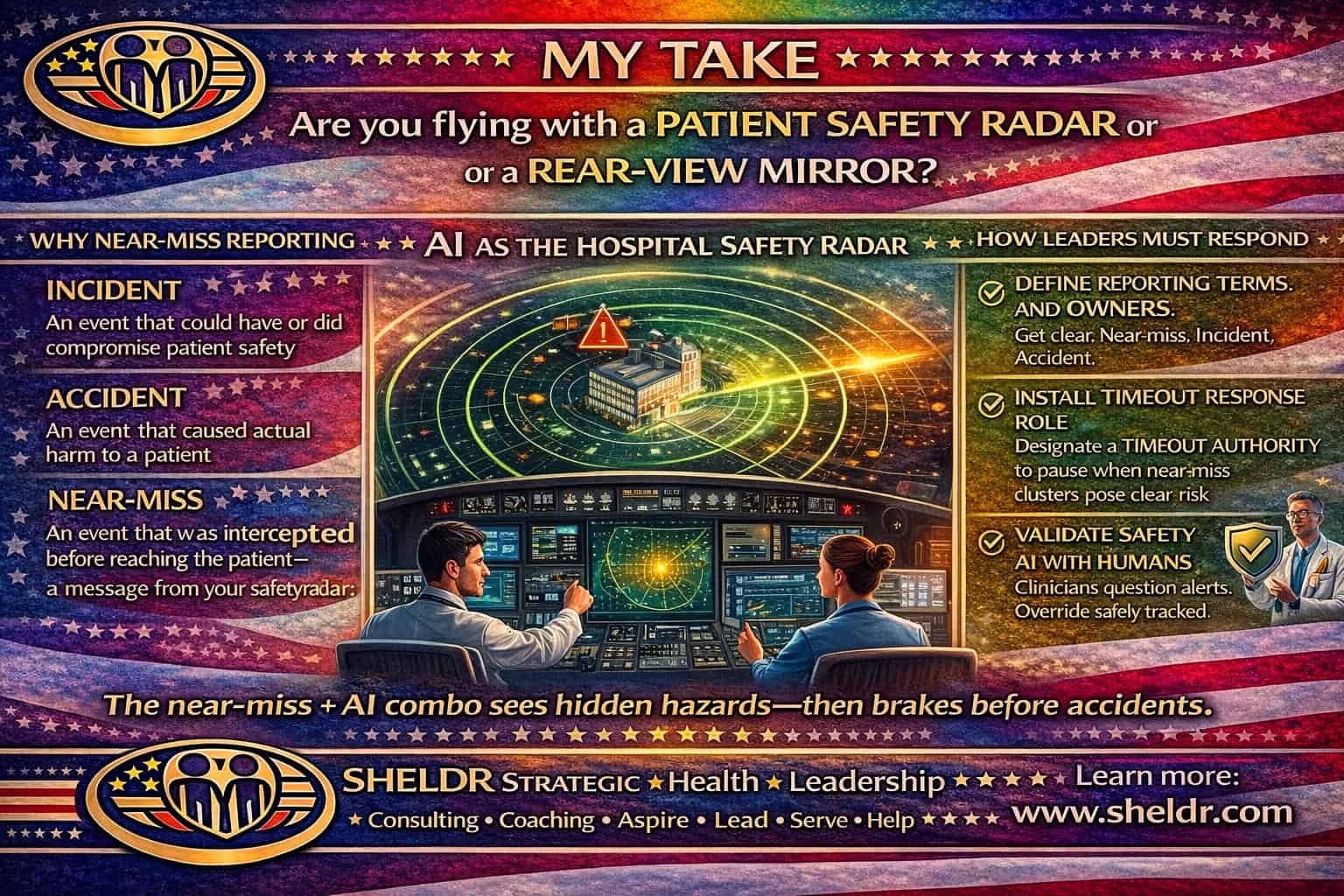

I keep coming back to a simple truth in patient safety. What leaders measure shapes what teams do. Your incident, accident, near-miss framing gives leaders a usable language. It also forces a decision. Do we learn before harm, or after harm?

I use a radar analogy on purpose. In aviation, crews do not wait for a crash report to improve flight safety. They rely on radar, checklists, crew communication, and disciplined escalation. Healthcare needs the same posture. Near-miss reports are the radar returns. They show weak signals early, often before injury, death, or a headline. A near-miss means the system almost failed and was saved by luck, vigilance, or an informal workaround. Leaders can treat it as a gift or as noise. High-reliability organizations treat it as a gift.

Now add artificial intelligence. Leaders hear “patient safety artificial intelligence” and jump straight to prediction models. I start elsewhere. I start with sensing. Hospitals already generate vast streams of data: free text, orders, monitor readings, imaging reports, handoffs, and incident narratives. Humans cannot scan all of it in real time. Artificial intelligence can. Used well, it becomes radar augmentation. Used poorly, it becomes a distraction generator.

This is where governance matters. A JAMA Health Forum article highlights the patient safety risks of artificial intelligence and the need for oversight across its lifecycle. I take a hard line. Safety work needs clear ownership, clear stop rules, and review capacity. No ownership means dashboards nobody trusts. No stop rules means everyone watches risk climb while throughput pressure wins. No review capacity means near-miss signals pile up until staff give up reporting.

Connect definitions to leader action

An incident can compromise safety. It may or may not cause harm. An accident causes harm. A near-miss gets intercepted before harm reaches the patient. In practice, near-miss reports often reveal weak points in communication, medication handling, labeling, staffing, device setup, and handoff logic. Leaders can mine near-misses for recurring patterns. Teams can redesign systems without the emotional weight of harm.

I see two common failure modes.

First, leaders treat reporting as compliance. They track counts, not learning. They send reminders, not fixes. Staff learn the lesson that reporting changes nothing.

Second, leaders treat “technology” as the fix. They buy a tool, then bolt it on outside the workflow. Staff get more clicks. Reporting falls. Trust falls.

A better path exists

Start with one high-risk workflow where near-miss reporting already happens. Medication safety works well. So do operating room site verification and falls prevention. Define what a near-miss looks like in plain language. Build one fast loop. Capture. Review. Fix. Share. Protect the reporter. Repeat. Only then add artificial intelligence.
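The fast loop can be made concrete for whoever builds the reporting tool. What follows is a minimal sketch, not a product design: it assumes a single report record that moves through the loop one step at a time and cannot skip review. The names `Stage`, `NearMissReport`, `capture`, and `advance` are hypothetical. "Protect the reporter" is modeled by giving the record no reporter field at all.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    CAPTURED = "capture"
    REVIEWED = "review"
    FIXED = "fix"
    SHARED = "share"


# Hypothetical transition table: each report moves one step at a time.
# "Repeat" means a new event starts a new report at CAPTURED.
NEXT_STAGE = {
    Stage.CAPTURED: Stage.REVIEWED,
    Stage.REVIEWED: Stage.FIXED,
    Stage.FIXED: Stage.SHARED,
}


@dataclass
class NearMissReport:
    workflow: str    # e.g. "medication"
    narrative: str   # free-text description of what almost happened
    # Deliberately no reporter field: protecting the reporter means the
    # review record never carries their identity.
    stage: Stage = Stage.CAPTURED
    history: list = field(default_factory=list)


def capture(workflow, narrative):
    """Capture a near-miss report at the first stage of the loop."""
    report = NearMissReport(workflow, narrative)
    report.history.append(report.stage)
    return report


def advance(report):
    """Move a report one step (Review, Fix, Share); steps cannot be skipped."""
    if report.stage not in NEXT_STAGE:
        raise ValueError("report already shared; a new event starts a new loop")
    report.stage = NEXT_STAGE[report.stage]
    report.history.append(report.stage)
    return report
```

The strict one-step transition is the point. A report cannot jump from capture to "closed" without a recorded review and fix, so the loop leaves an audit trail that leaders can check.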

When artificial intelligence enters, leaders need to decide its role in the radar system.

- Role one. Signal detection. Natural language processing can scan free-text safety narratives, nursing notes, and order comments for recurring patterns. It can cluster “wrong dose almost given,” “look-alike packaging,” “order set confusion,” “handoff mismatch,” and “delayed escalation.” This supports safety staff by prioritizing review, not by replacing it.

- Role two. Early warning from physiologic streams. Many patients deteriorate with subtle trends before crisis. An early warning tool can alert staff sooner, but only if leaders align staffing, escalation, and response protocols. A warning without response capacity becomes alarm fatigue.

- Role three. TIMEOUT AUTHORITY triggers. Your text raises time-out concepts in procedures. I extend it. TIMEOUT AUTHORITY means a named role can pause a process when risk exceeds a threshold. Artificial intelligence can help by flagging “high-risk profile plus high-risk context” patterns. The pause still belongs to clinicians and leaders. The tool only raises the flag.
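Role one can be illustrated with a deliberately simple stand-in. This sketch uses keyword rules, not a trained natural language processing model. It tags each narrative with the article's categories and ranks the categories by frequency to order human review. The keyword lists and the names `tag_narrative` and `review_queue` are hypothetical assumptions. A real deployment would use a validated classifier and local vocabulary.

```python
from collections import Counter

# Hypothetical keyword rules standing in for a trained NLP classifier.
# Category names come from the article; the keywords are illustrative assumptions.
CATEGORY_KEYWORDS = {
    "wrong dose almost given": ["wrong dose", "10x", "tenfold", "decimal"],
    "look-alike packaging": ["look-alike", "similar vial", "similar packaging"],
    "order set confusion": ["order set"],
    "handoff mismatch": ["handoff", "hand-off", "sign-out"],
    "delayed escalation": ["delayed escalation", "not escalated", "late call"],
}


def tag_narrative(text):
    """Return every category whose keywords appear in one free-text narrative."""
    lowered = text.lower()
    return [
        category
        for category, keywords in CATEGORY_KEYWORDS.items()
        if any(keyword in lowered for keyword in keywords)
    ]


def review_queue(narratives):
    """Rank categories by how many narratives mention them.

    The ranking only orders human review; it never closes a case on its own.
    """
    counts = Counter()
    for text in narratives:
        counts.update(tag_narrative(text))
    return counts.most_common()
```

The output is a ranked list of (category, count) pairs for the safety team's next review. Nothing in it marks a case as resolved, which keeps the tool in the prioritizing role the article describes.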

This is where many organizations stumble. They treat regulatory clearance or vendor claims as permission for autonomy. Operational safety still requires local rules. Use-case boundaries. Human-in-the-loop review. Monitoring for drift. Planned rollback paths. NPJ Digital Medicine work on translation and monitoring reinforces this. Clinical impact depends on workflow integration, evaluation, and post-deployment updating, not model performance alone.
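Monitoring for drift and a planned rollback path can start as a plain threshold rule on one monitored metric. This is a minimal sketch, not a statistical drift test. It assumes the organization records, for each monitoring window, how many alerts reviewers confirmed as real. It compares that precision to a baseline measured at go-live. The class name `DriftCheck` and the tolerance value are hypothetical choices that a local governance group would set.

```python
from dataclasses import dataclass


@dataclass
class DriftCheck:
    baseline_precision: float  # share of alerts confirmed at go-live validation (assumed known)
    tolerance: float = 0.10    # hypothetical allowed drop before a human review

    def evaluate(self, confirmed, total_alerts):
        """Return 'ok', 'review', or 'rollback' for the latest monitoring window."""
        if total_alerts == 0:
            return "review"  # silence from the tool is itself worth a human look
        precision = confirmed / total_alerts
        drop = self.baseline_precision - precision
        if drop > 2 * self.tolerance:
            return "rollback"  # planned path: revert to the prior version or disable
        if drop > self.tolerance:
            return "review"
        return "ok"
```

The rule is crude on purpose. It turns "monitor for drift" into a decision that someone must act on, and the "rollback" branch only has meaning if the rollback path was planned before deployment.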

Culture remains the limiting factor

Your post emphasizes psychological safety and non-punitive systems. I agree. If staff fear punishment, they stop reporting. If leaders hunt for culprits, teams hide problems. If leaders ignore reports, staff feel used. Artificial intelligence cannot fix culture. It can amplify culture. In a fear culture, staff see artificial intelligence as surveillance. In a learning culture, staff see it as support.

So what should leaders do next?

I would pick one safety problem with clear harm asymmetry and high near-miss frequency. Medication near-miss detection fits. Build a “radar room” operating model. One cross-functional huddle each week with pharmacy, nursing, medical staff, safety, and informatics. Bring near-miss clusters. Decide one system fix. Implement within thirty days. Publish results to staff. Keep it simple.

Then add artificial intelligence as a second pair of eyes on reporting streams. Use it to prioritize review, not to close cases. Set rules for use. Document how the tool gets data access, how privacy gets protected, how outputs get validated, and how errors get handled. Put TIMEOUT AUTHORITY in writing. Define who can pause deployment or disable the tool when risk rises.
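Putting TIMEOUT AUTHORITY in writing can also mean putting it in the system. This sketch assumes a written policy that names which roles may pause the tool and which may disable it, and that logs every attempt, allowed or not. The role names, the `TIMEOUT_AUTHORITY` table, and the `exercise_timeout` function are hypothetical illustrations. Each organization would name its own roles.

```python
from dataclasses import dataclass, field

# Hypothetical written policy: which named roles may pause or disable the tool.
# Role names are illustrative assumptions; each organization sets its own.
TIMEOUT_AUTHORITY = {
    "pause": {"patient safety officer", "chief medical information officer", "charge nurse"},
    "disable": {"patient safety officer", "chief medical information officer"},
}


@dataclass
class ToolState:
    status: str = "active"  # "active", "paused", or "disabled"
    log: list = field(default_factory=list)


def exercise_timeout(state, role, action, reason):
    """Apply a pause or disable if the role holds that authority; log every attempt."""
    allowed = role in TIMEOUT_AUTHORITY.get(action, set())
    state.log.append((role, action, reason, allowed))
    if allowed:
        state.status = "paused" if action == "pause" else "disabled"
    return allowed
```

In this sketch a frontline role can pause quickly, and fewer roles can take the heavier step of disabling. Every attempt, allowed or refused, is logged so that leaders can review how the authority was used.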

Measure what matters

Do not measure “tool adoption.” Measure leading indicators tied to safety. Near-miss reporting volume may rise at first. Good. It signals trust. Then measure time to review, time to action, recurrence reduction, and accident reduction for the targeted workflow. Track false alarm rates for artificial intelligence alerts. Track clinician override rates. Use those signals to tune thresholds or disable features.
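Most of these leading indicators are simple arithmetic once timestamps and alert outcomes are captured. This sketch assumes a hypothetical record schema: reports carry `reported`, `reviewed`, and `actioned` datetimes, and alerts carry `confirmed` and `overridden` booleans. It computes report volume, median time to review and to action, false alarm rate, and override rate for one period. Recurrence and accident reduction require comparing periods against a baseline, so they come from running this period over period rather than from a single call.

```python
from datetime import datetime
from statistics import median


def hours_between(start: datetime, end: datetime) -> float:
    """Elapsed hours between two timestamps."""
    return (end - start).total_seconds() / 3600


def rate(flags):
    """Share of True values, or None when there is nothing to measure yet."""
    flags = list(flags)
    return sum(flags) / len(flags) if flags else None


def safety_metrics(reports, alerts):
    """Leading indicators for one targeted workflow over one period.

    reports: dicts with 'reported', 'reviewed', 'actioned' datetimes (assumed schema)
    alerts:  dicts with booleans 'confirmed' (reviewer agreed the alert was real)
             and 'overridden' (clinician dismissed it)
    """
    review_hours = [hours_between(r["reported"], r["reviewed"]) for r in reports]
    action_hours = [hours_between(r["reported"], r["actioned"]) for r in reports]
    return {
        "report_volume": len(reports),
        "median_hours_to_review": median(review_hours) if review_hours else None,
        "median_hours_to_action": median(action_hours) if action_hours else None,
        "false_alarm_rate": rate(not a["confirmed"] for a in alerts),
        "override_rate": rate(a["overridden"] for a in alerts),
    }
```

Medians are used rather than means so that one stalled case does not hide an otherwise fast loop. A high override rate paired with a high false alarm rate is the kind of signal the article suggests using to tune thresholds or disable a feature.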

One last point

Near-misses often sit in “gray zones.” They look messy. They resist easy categorization. Artificial intelligence can help organize them, but leaders must resist the urge to automate away judgment. Judgment remains the safety core. Artificial intelligence remains radar. Leaders remain accountable.

If you want predictive patient safety, build the radar system first. Define events. Protect reporting. Staff review. Fix processes. Then add artificial intelligence as augmentation. Do not reverse the order.

Learn More

From my Website: 3 Unbreakable Rules for Leading Health AI Effectively

Ratwani RM, Bates DW, et al. AI and Patient Safety. JAMA Health Forum. 2024. https://jamanetwork.com/journals/jama-health-forum/fullarticle/2815239

Bates DW, Levine D, et al. Potential of AI to improve patient safety. NPJ Digit Med. 2021 Mar 19;4(1):54. doi: 10.1038/s41746-021-00423-6. PMID: 33742085; PMCID: PMC7979747. https://pubmed.ncbi.nlm.nih.gov/33742085/

Vu V, Buléon C, et al. Changing minds, saving lives: how psychological safety transforms healthcare. BMJ Open Qual. 2025. doi: 10.1136/bmjoq-2024-003186. https://doi.org/10.1136/bmjoq-2024-003186

Feng J, Phillips RV, et al. Clinical AI QI: continual monitoring and updating of AI algorithms. NPJ Digit Med. 2022 May 31;5(1):66. doi: 10.1038/s41746-022-00611-y. PMID: 35641814. https://pubmed.ncbi.nlm.nih.gov/35641814/